It is widely assumed that smoothness in online activity is always a good thing. You register in one click, pay in one tap, and get quick suggestions, infinite scrolling, prompt responses, and rapid rewards. If the same platform adds a delay, requires further validation, or displays information that has to be read carefully, users can experience it as a hassle. But there are times when a little slowness in the digital space is a good thing: it helps users think about what they are doing, and it gives the platform adequate time to act on something critical. Sounds interesting?

Where does this matter most? These concerns are particularly relevant to regulated online environments, where user behavior can shift rapidly. That is a major reason why the conversation around data-driven compliance is becoming more practical: it is about using behavioral signals to decide when a platform should gently slow the user down.

Why speed can create blind spots

People like speed because it removes effort. Suppose a user wants to deposit money, join a game, send a message, accept a bonus, or make a trade, and the platform completes the action in a few seconds. From a business point of view, that looks efficient. From a social computing perspective, it raises a question: what happens when a person is acting emotionally?

Many risky online actions happen during short emotional windows. Someone may be frustrated after losing money. Someone may be angry in a comment thread. Someone may be excited by a reward and stop thinking clearly about limits. A user might be tired, distracted, or simply following a crowd without considering the specifics.

A platform that removes every pause can make these moments stronger. It gives the user no space between impulse and action. That is useful for conversion metrics, yet it can create problems for safety, trust, and compliance.

Digital friction is one way to create a small pause. It does not need to be implemented as a penalty. Instead, it can be a reminder message, an additional confirmation screen, a session timer, or a short delay before completing an operation that is considered risky.

What useful friction looks like

Suppose you are playing a game and run into friction that has no clear reason. How would you feel? Probably not happy. Quality friction, on the other hand, appears only when needed and explains its reason in concise wording. It should feel like assistance in the process rather than an obstacle.

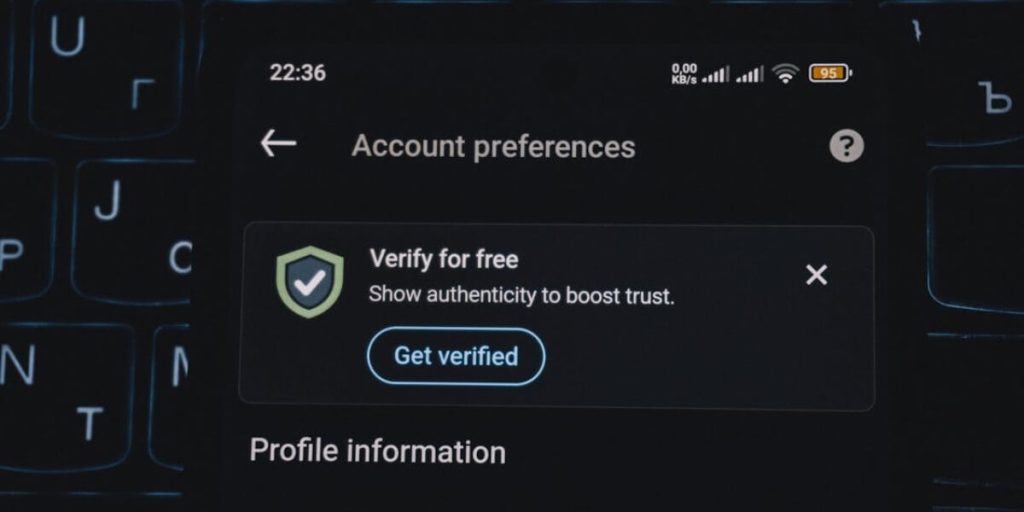

Let’s look at some practical situations. Suppose a player who has spent several hours online tries to raise a deposit cap. In that case, the platform might include one additional confirmation step. A community-focused app might show a user a reminder screen before they send another aggressive message. In the same way, if a user tries to change their payout options on a marketplace following a suspicious login, some additional verification might be necessary.

It is crucial to remember that friction does not always have to be loud. The key is to create conditions in which a user gets an opportunity to think twice.

Useful friction can include:

- A short cooling-off delay before sensitive changes

- A reminder after long sessions or repeated actions

- A plain-language explanation before financial decisions

- A confirmation step when behavior changes suddenly

- A limit review screen after intense activity

- Extra verification after unusual account access

- A support link shown during high-risk moments

The key here is context. A platform should avoid treating every user as a problem. It should respond to patterns that suggest stress, fraud, confusion, or loss of control.
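As a rough illustration of responding to context rather than to every user, the sketch below maps a few situations from the list above to a friction type. Everything in it is an assumption made for illustration: the `ActionContext` fields, the `Friction` names, the 180-minute session threshold, and the priority order are hypothetical and would need to come from a platform's own policies and testing.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Friction(Enum):
    """Hypothetical friction types, taken from the list in this article."""
    EXTRA_VERIFICATION = "extra verification"
    COOLING_OFF_DELAY = "short cooling-off delay"
    CONFIRMATION_STEP = "confirmation step"
    SESSION_REMINDER = "session reminder"


@dataclass
class ActionContext:
    """Hypothetical description of what is known when a user acts."""
    unusual_login: bool = False
    sensitive_change: bool = False
    sudden_behavior_change: bool = False
    session_minutes: int = 0


# Assumed threshold for a "long" session; not based on any real data.
LONG_SESSION_MINUTES = 180


def choose_friction(ctx: ActionContext) -> Optional[Friction]:
    """Return one friction type for the context, or None for no friction.

    The priority order (security first, then sensitive changes, then
    behavior, then session length) is an illustrative assumption.
    """
    if ctx.unusual_login:
        return Friction.EXTRA_VERIFICATION
    if ctx.sensitive_change:
        return Friction.COOLING_OFF_DELAY
    if ctx.sudden_behavior_change:
        return Friction.CONFIRMATION_STEP
    if ctx.session_minutes >= LONG_SESSION_MINUTES:
        return Friction.SESSION_REMINDER
    # Most actions should pass through without any interruption.
    return None
```

Returning `None` by default reflects the point above: an ordinary action from an ordinary session gets no friction at all.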

The role of behavioral signals

It is no secret that online platforms track behavior. They already collect many signals: login frequency, session duration, feature usage, decision-making speed, and abandonment rates. The task now is to use these insights ethically.

A single signal is rarely enough. A late-night login may be normal. A large payment may be planned. A long session may happen because someone has free time. Risk becomes clearer when several signals appear together.

For example, imagine this pattern: a user logs in after midnight, makes several deposits, switches rapidly between products, ignores a limit reminder, and contacts support about a failed payment. Any one of these actions could be harmless. Together, they may suggest the person is in a stressed state.

That is where a risk model can help. It can surface cases that deserve attention. Still, the platform should be careful with labels. An intervention should inform the individual without being overbearing, so human judgment, clear policies, and testing are needed to keep it from becoming unethical or invasive.
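The idea that one signal is rarely enough, while several together may deserve attention, can be sketched as a simple additive score. This is a minimal sketch and not a real risk model: the signal names mirror the example pattern above, and the weights, `REVIEW_THRESHOLD`, and `MIN_SIGNALS` are hypothetical values chosen only to make the example concrete.

```python
from dataclasses import dataclass
from typing import Iterable, Tuple

# Hypothetical weights for illustration only; not calibrated on any data.
SIGNAL_WEIGHTS = {
    "late_night_login": 1,
    "repeated_deposits": 2,
    "rapid_product_switching": 1,
    "ignored_limit_reminder": 2,
    "support_contact_failed_payment": 1,
}

# Assumed cut-offs: a case is surfaced only when several signals
# co-occur AND their combined weight is high enough.
MIN_SIGNALS = 3
REVIEW_THRESHOLD = 4


@dataclass(frozen=True)
class RiskAssessment:
    score: int
    signals: Tuple[str, ...]
    needs_review: bool  # surfaced for human attention, not a verdict


def assess_session(observed: Iterable[str]) -> RiskAssessment:
    """Combine observed signals into a score; unknown names are ignored."""
    known = sorted({s for s in observed if s in SIGNAL_WEIGHTS})
    score = sum(SIGNAL_WEIGHTS[s] for s in known)
    needs_review = len(known) >= MIN_SIGNALS and score >= REVIEW_THRESHOLD
    return RiskAssessment(score, tuple(known), needs_review)
```

With these assumed values, a lone late-night login is never flagged, and neither are two heavily weighted signals on their own. The flag is named `needs_review` rather than something like "risky user" on purpose: the output is a prompt for human judgment and a gentle intervention, not a label on the person.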

Why tone matters

There is no doubt that politeness has a major role in online communications. A safety message can succeed or fail because of tone. Friction is more likely to be accepted by users when it is framed politely. Users are less likely to accept friction when it is presented as accusatory.

A message like “Your behavior is suspicious” creates tension. A message like “You have made several quick changes today. Please review this before continuing” feels more neutral. The second version gives information and asks for attention. That difference shows how much tone matters.

The tone is especially important in communities and gaming platforms where users already feel emotionally involved. A cold warning can make someone defensive. A calm prompt can create a moment of awareness.

Good intervention language is short, specific, and practical. It avoids shame. It avoids vague threats. It makes sure the user understands what is happening and what their next step is.

The future of safer digital experiences

The internet has spent years removing friction. Now, many platforms are learning where friction should return. The goal is not to slow everything down. The goal is to understand which actions deserve an extra moment.

This shift changes how we think about platform design. Safety is no longer only a legal requirement hidden in policy pages. It becomes part of the user journey. It appears in timing, language, limits, verification, and support.

From a user perspective, this could lead to safer and more trusted digital spaces. For platforms, it can mean fewer negative repercussions, better abuse prevention, and stronger relationships with users. For research, it raises an important question: how do we create an ethical digital environment that respects user autonomy yet knows when to intervene?

An optimal digital experience is not necessarily the fastest one.

As a platform focused on Social Computing and human-computer interaction, social computing journal aims to discuss how the digital world is impacting users. If you like this article, please share it with your friends.
